In a short time, AI agents have gone from experiments to everyday tools. Millions of people and businesses worldwide already use them to automate all kinds of workflows. OpenClaw, an open agent platform, has in just a few months grown into one of the world's most talked-about AI projects.

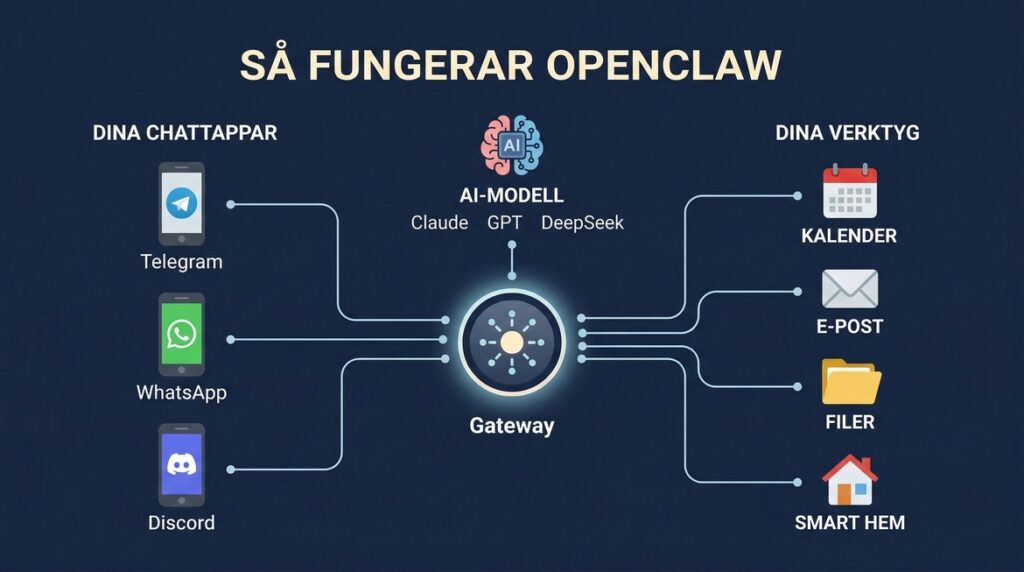

If you're not already familiar with OpenClaw, it can be briefly summarised as follows: OpenClaw is a platform that connects your existing chat apps – Telegram, WhatsApp, Slack, Teams – to powerful AI models. The result is a personal AI assistant that does not just answer questions but actually performs tasks: summarising emails, booking meetings, monitoring systems and coordinating workflows. It works around the clock, in the background, in the places where you already work.

The threshold to get started is low. A single command is enough to start an instance. Doing it safely, however, is a completely different matter.

Why security is not optional

An AI agent with access to your email, your documents, your API keys, and your chat channels is, by definition, a system with broad access. This means misconfiguration can have consequences far beyond the agent itself.

The risks are concrete. An agent that reads emails and documents could, if not properly restricted, inadvertently forward sensitive information to external services. An agent processing incoming content could be tricked into following hidden instructions without the user noticing – so-called prompt injection. If multiple people share an instance without clear permission boundaries, one user could potentially access another's data. And the rapidly growing ecosystem of plugins means not all components are equally scrutinised – security company Cisco has already identified problematic third-party plugins in the ClawHub library.

It's also important to clarify what "local control" means in practice. The OpenClaw instance can run on your own hardware, but the AI model itself – the part that does the heavy lifting – is in most cases run by an external provider such as Anthropic, OpenAI or Google via an API. This means that data is still sent outside your infrastructure. Running a full-scale AI model locally requires specialised hardware at a cost that is unrealistic for most.

Precisely because of this, configuring which data is sent out, how it is handled and who has access to what is such a central question – and exactly the kind of question that requires more than a standard installation.

Professional implementation makes the difference

Installing OpenClaw is one thing. Configuring it so that the right people have access to the right data, that the agent cannot be manipulated externally, that updates are rolled out continuously, and that everything works stably in real operation – that requires experience and systematic work.

We offer consultancy support throughout the entire process: needs analysis and solution design, installation and secure configuration, skills customisation, and ongoing operation and maintenance. This applies to both individuals who want a personal AI assistant with control over their data, and companies building agent-based workflows for teams or customers.

For many, it is precisely the professional implementation that determines whether OpenClaw becomes an asset in the business – or remains an experiment.

Ready to take the plunge?

Contact us at for help with deployment, configuration, maintenance and consulting support for OpenClaw – from initial idea to secure operation.

We offer consulting, implementation, and ongoing operational support for OpenClaw to both private individuals and companies who want to use AI agents in a secure and practical way.